New questions have surfaced in the artificial intelligence field following reports that the Department of Defense (DoD) has designated Anthropic a potential supply chain risk. The reports have sparked discussion across the tech and defense industries, and many people are wondering what the designation means, why the situation developed, and how it could affect AI companies that work on government contracts.

In this article, we explain the Anthropic ban case in a straightforward way. We piece together what occurred, the reasons behind the DoD’s expressed concerns, and the implications for AI safety and national defense.

The Beginning of the Anthropic Issue

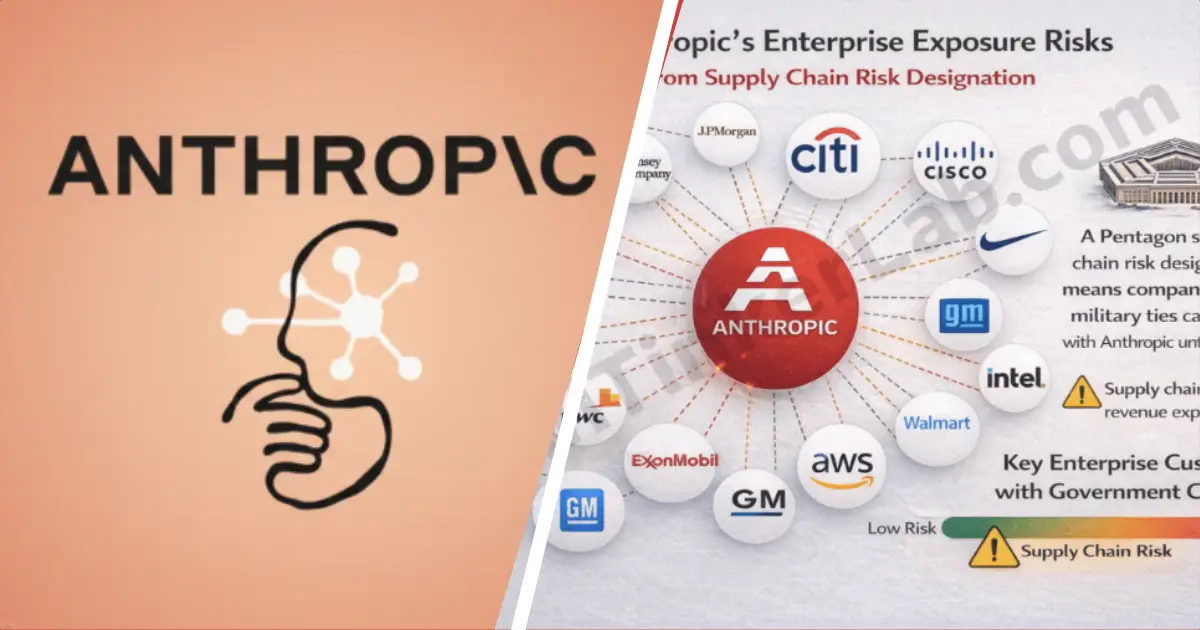

According to recent reports, the United States Department of Defense has classified Anthropic as a supply chain risk. Anthropic is an artificial intelligence company that focuses on AI safety and responsible AI development, building sophisticated AI models and advocating for the responsible governance of AI.

This decision does not mean that AI development will stop. However, it does place scrutiny on how AI companies are assessed when they partner with government agencies. The phrase “Anthropic supply chain risk” has quickly become a trending expression in tech and defense industry conversations.

What is Supply Chain Risk?

To understand the Anthropic ban, we first have to explain what supply chain risk means. In a government and military context, supply chain risk refers to possible weaknesses in technology providers. These weaknesses can involve security, foreign influence, or data management.

When the DoD refers to a company as a supply chain risk, it means that the company may pose a security risk. This does not necessarily mean that the company has done something wrong. For the DoD, the label is usually protective in nature: the department is trying to protect something sensitive. In most cases, the details are withheld for the sake of national security.

It boils down to this: the DoD wants to be sure that the companies supplying its artificial intelligence (AI) services are secure.

What Raised Flags About Anthropic?

Reports indicate that the concern relates to oversight of AI safety and to risk management on a larger scale. The Anthropic supply chain risk label is probably a small part of a broader compliance review of AI providers that do defense-related business.

The DoD has stepped up its oversight of artificial intelligence systems because AI is increasingly used in planning, logistics, and cybersecurity. The government wants the highest possible degree of control over how these systems are built and managed.

Anthropic is publicly known for its focus on AI safety and responsible development. However, defense agencies may have very particular and strict policies, and even small uncertainties can result in prolonged evaluations or limitations.

The scenario makes more sense when viewed through a national security lens. Rather than respond after a problem has developed, government agencies prefer to act proactively.

Effects on AI Innovation and Safety

The discussion of the Anthropic ban also raises broader questions about the regulation of AI. Many scientists think AI companies must strike a balance between technical progress and ethical responsibility. Innovation, on the other hand, can only be stifled for a limited time.

Even a company that emphasizes AI alignment and safe model behavior can, it seems, be regarded as a supply chain risk.

Some analysts see this as a sign of the tightening governance of AI. Defense agencies require absolute control and transparency in the supply chain, partnership, and data governance. Safety-centric companies are equally affected.

This implies a more regulated security framework for AI companies partnering with governments. The aim is not to limit the development of AI, but to ensure the partnership on AI is easily manageable and uncomplicated to monitor.

What’s Next?

Right now, the Anthropic supply chain risk classification might lead to an indefinite ban, but that isn’t guaranteed. Labeling a company is only the beginning and there are often subsequent reviews, audits, or compliance revisions. Companies are likely to submit more documents and update their policies.

This situation is ongoing, and Anthropic and the government may release clarifying information or updates in the near future. For now, it underscores the extent to which AI is being integrated into defense systems.

This situation illustrates that AI safety conversations are more than just ethical. They are also about infrastructures, relational frameworks, and international security.

Impacts on the Wider Industry

The Anthropic ban also points to the changing attitude and growing sophistication of the world’s governments toward AI technology. AI is now viewed as the central nervous system of military operations, cyber defense, and other core functions of national defense, including the planning and operational execution of warfare.

This means that providers of technology with integrated AI systems, and anything else that involves AI, will be subject to a much greater level of control and oversight.

The primary message for technologists and businesses is that compliance and safety frameworks must be embedded in AI systems from the earliest phases of technology development and system engineering. Doing so will support future operational flexibility and stability in partnerships and collaborations.

Frequently Asked Questions

- What is the Anthropic ban story about?

  The story covers reports that the U.S. Department of Defense has classified Anthropic as a supply chain risk because of concerns related to AI safety.

- Does this mean Anthropic is banned permanently?

  Not necessarily. Supply chain risk labels usually lead to reviews or audits, not bans.

- Why would the DoD flag an AI company?

  The DoD flags companies over national security concerns, which include data protection, control of infrastructure, and the level of transparency.

- Is this about AI safety?

  It is. AI safety and supply chain transparency are both essential in defense-related partnerships.

- How does this affect the AI industry?

  It shows that AI companies face significant government control and oversight, especially when they do sensitive work.

Final Thoughts

The Anthropic supply chain risk label is a clear indicator of how important AI governance is. The main focus of future AI partnerships will be transparency, security, and responsible innovation.

As of now, this case reminds us that new tech must comply with regulations surrounding defense systems. The dialogue surrounding AI safety is more alive, more sophisticated, and more crucial than ever.